March 16, 2016 · 7 min read

Large Data with Scikit-learn - Boston Meetup

By Alexis Perrier

Large Data with Scikit-learn

-

Alexis Perrier - @alexip

-

Data & Software - @BerkleeOnline - Day

-

Data Science contributor - @ODSC - Night

Plan

- What is large data?

out-of-core, streaming, online, batch?

-

Algorithms

-

Implementation

-

Examples

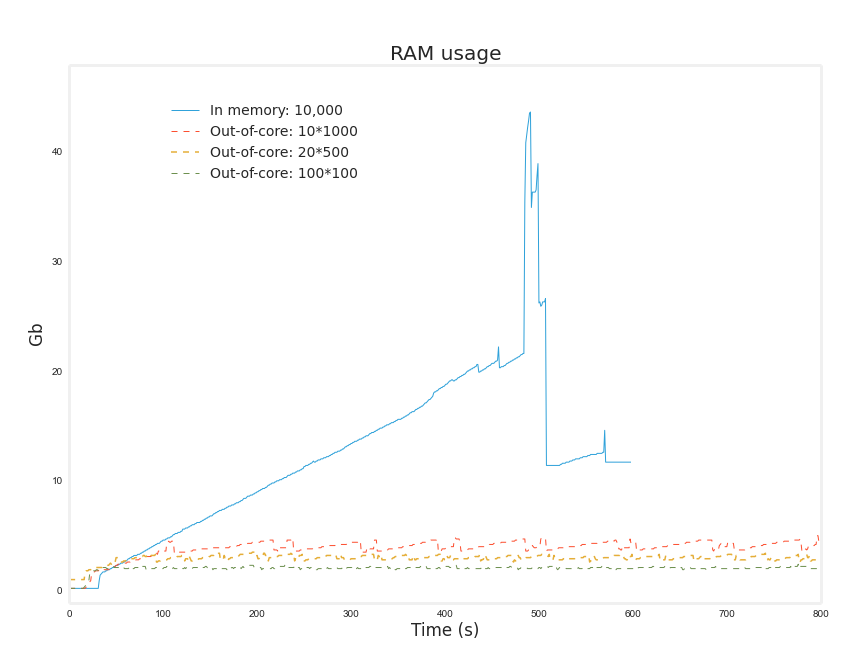

Cannot fit in the main memory (RAM)

2-10Gb makes the computer swap too much. Slow!

From in-memory to on-disk

- Being able to work with data that fits on disk on a single machine with no cluster

- Dato: GraphLab create

- H20.ai

- memory optimized AWS instances

- pySpark MLlib

- Text: Gensim [py]

- R: SGD streaming package

- Out-of-core / External memory

Data does not fit in main memory => access data stored in slow data store (disk, ...), slower (10x, 100x)

-

Offline: all the data is available from the start

-

Online: Serialized. Same results as offline. Act on new data right away

-

Streaming Serialized. limited number of passes over the data, can postpone processing. "old" data is of less importance.

-

Minibatch, Serialized in blocks

-

Incremental = online + minibatch

clf = Some Model or Transformation

- Train on training data X_train, y_train

clf.fit(X_train,y_train)

- Predict on test data: X_test => y_test

y_test = clf.predict(X_test)

- Assess model performance on test: y_truth, vs y_test

clf.score(y_truth, y_test, metric = ...)

- Predict on new data: \( \hat{y} = clf.predict(X_{new}) \)

-

Split the training data in blocks: mini-batch (sequential, random, ...)

-

Load each block in sequence

-

Train the model adaptively on each block

-

Convergence!

- Batch size n

- split the data in N blocks

- .partial_fit instead of .fit

clf.partial_fit(X_train(i),y(i), all_classes)

- All possible classes given to partial_fit on first call

- i = 1..N number of blocks

II Algorithms

| Regression | Classification | |

| Stochastic Gradient (1) | X | X |

| Naive Bayes (2) | X |

(1) SG + PassiveAggressive + Perceptron (clf only)

(2) NB: MultinomialNB, BernoulliNB

| Clustering | Decomposition | |

| MiniBatchKMeans | X | X |

| DictionaryLearning, PCA | X |

<script type="text/template">

<!-- .slide: data-background="#FFFFFF" -->

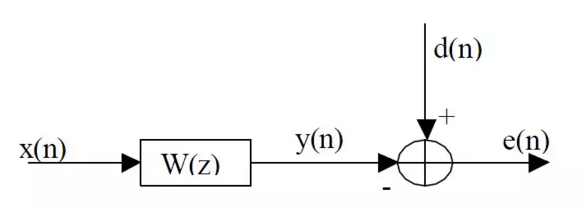

Stochastic Gradient - Wiener filtering

Gradient Descent solves

Deterministic!

<script type="text/template">

<!-- .slide: data-background="#FFFFFF" -->

SGD / LMS:

Approximate the gradient one sample at a time \( x_i, y_i \)

- Slow convergence

- Poor approximation of Gradient => oscillations

Mini-batch SGD / Block-LMS

N sample at a time to estimate the gradient

Less gradient noise, better convergence than SGD, still very optimizable

- GD: loss = MSE

- Passive Aggressive: Hinge loss

- Perceptron: loss = +/- 1

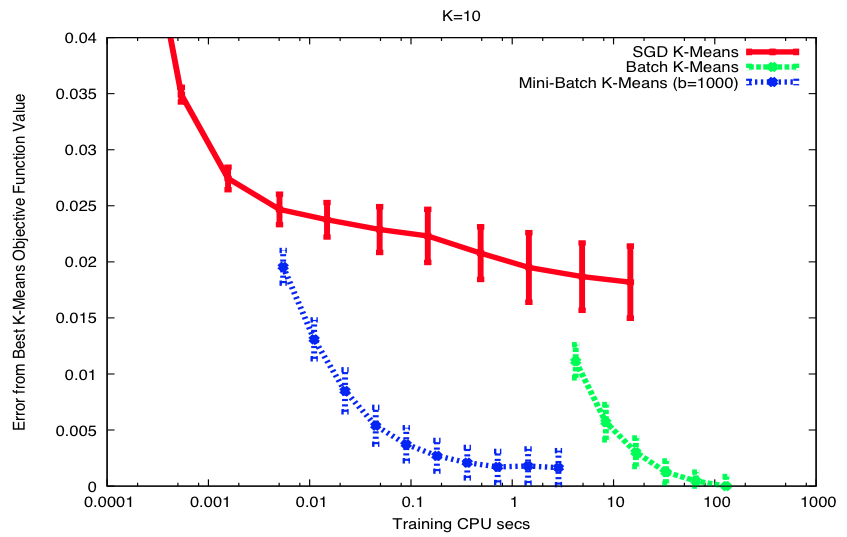

D. Sculley @Google, 2010, Web Scale K-Means Clustering

- Each mini-batch

- Each sample \( x \) in the batch

- Find closes center \( C_{x} \) (min dist)

- Each sample \( x \) in the batch

- Update \( C_{x} \) with

- Each sample \( x \) in the batch

Same convergence level

-

Classification: Mini-batches need to be balanced for classes

-

Perceptron, PA and SGD put different emphasis on samples over time.

-

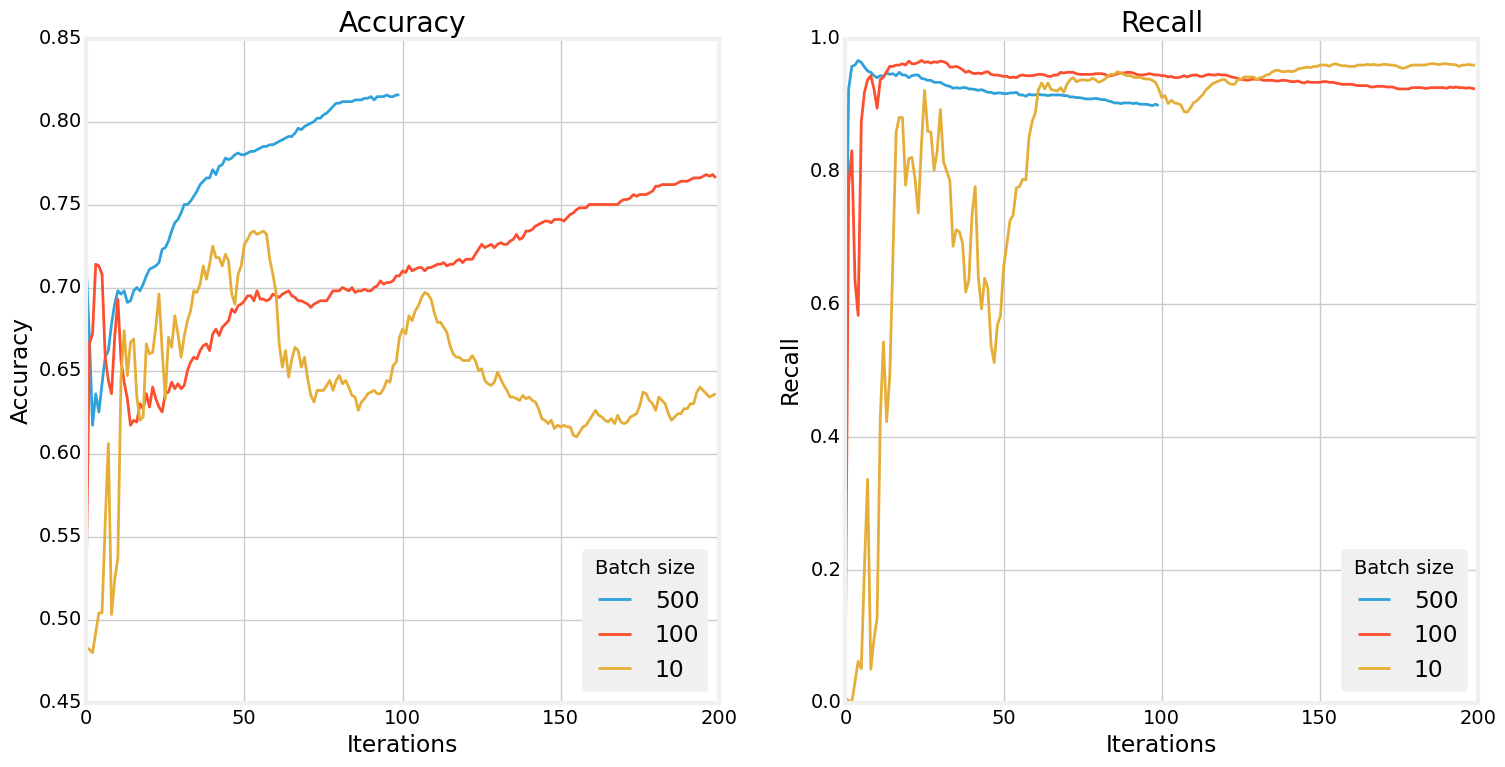

Batch size influences?

- time efficiency of training (small batch) VS noisiness of gradient estimate (large batch)

Adding some noise (small batch) can be interesting to get out of local minimas

In practice

- small to moderate mini-batch sizes (10-500)

- decaying learning rate

III Examples

Not same partition as K-Means, but much faster

Same convergence level

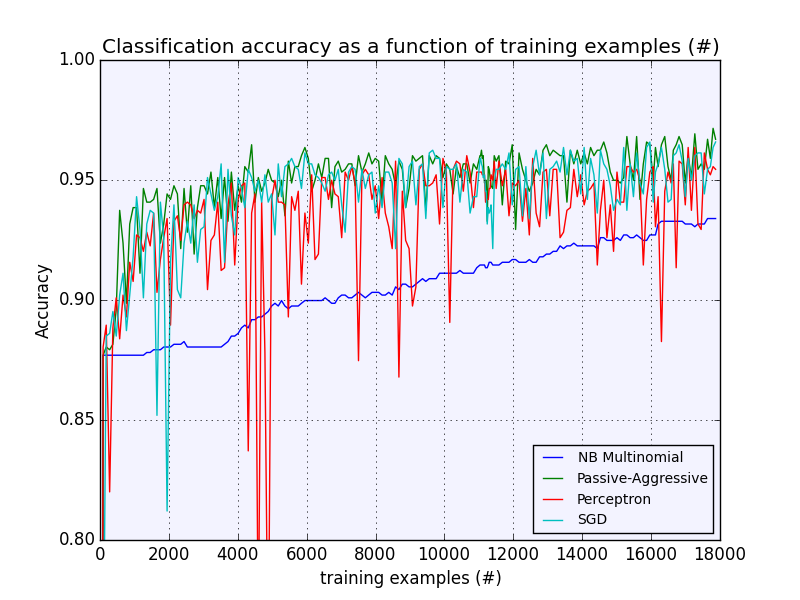

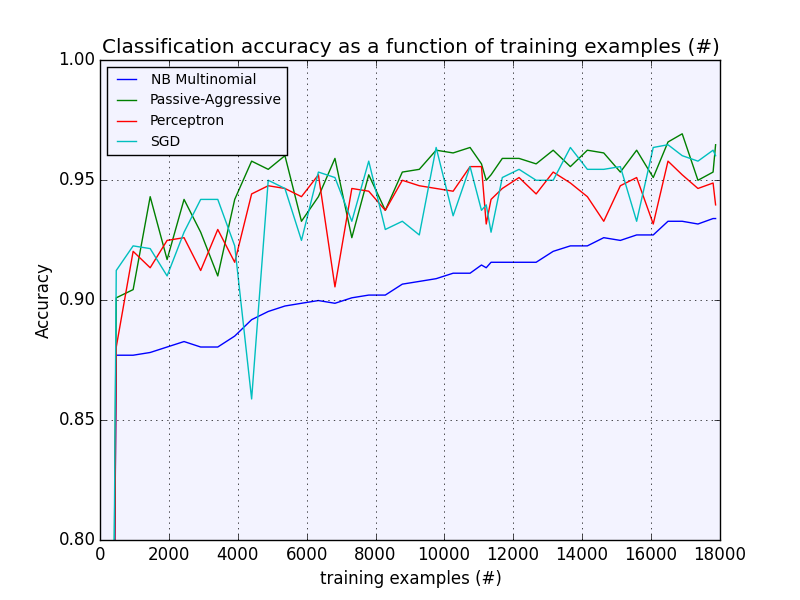

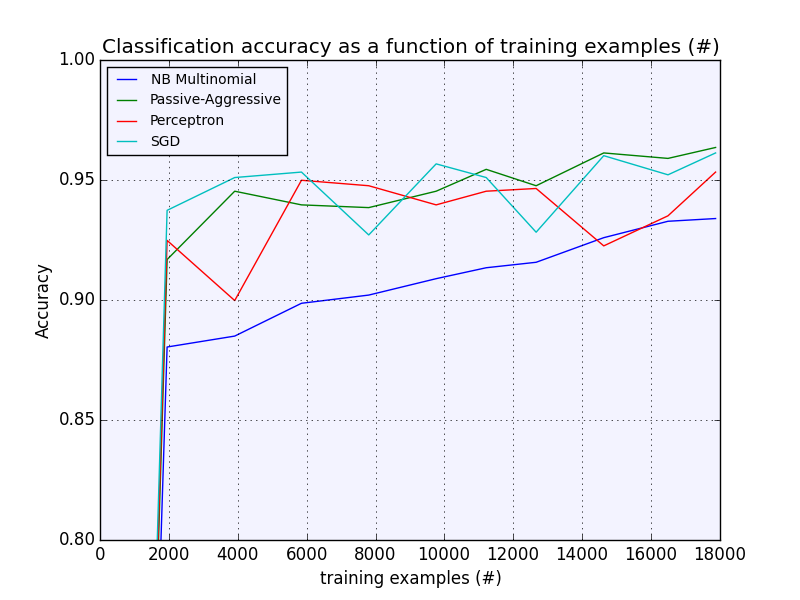

Batch sizes: 100, 500, 2000

Accuracy results are similar

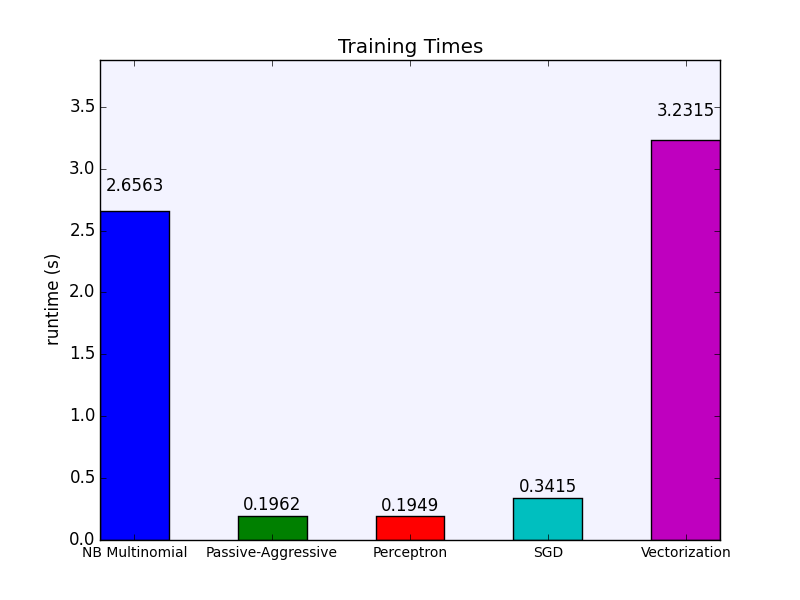

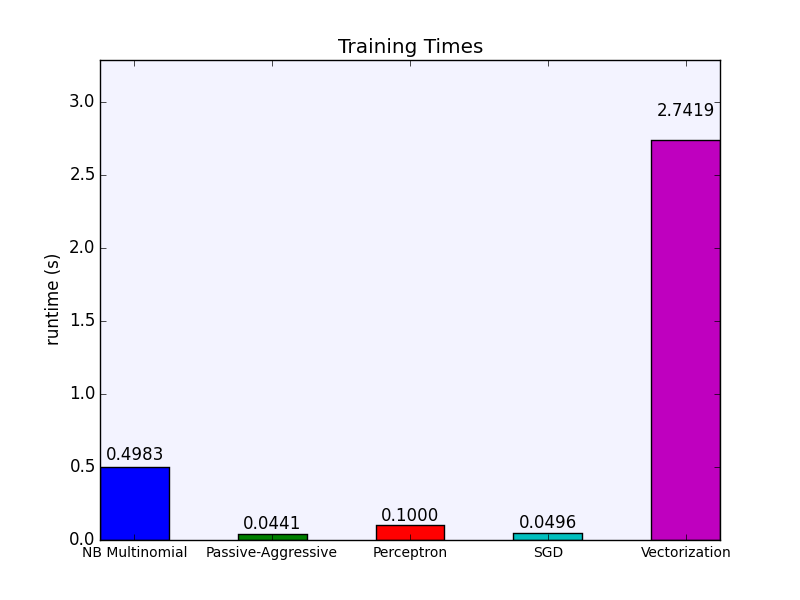

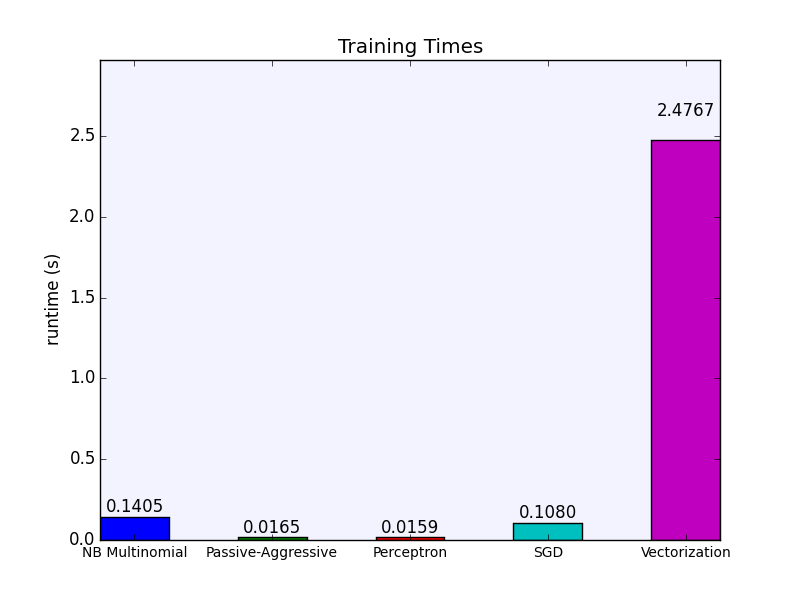

Batch sizes: 100, 500, 2000

Training times decreases for modelsStays basically the same for vectorization

feature extraction, vectorization: TfIdf / Hashing Trick

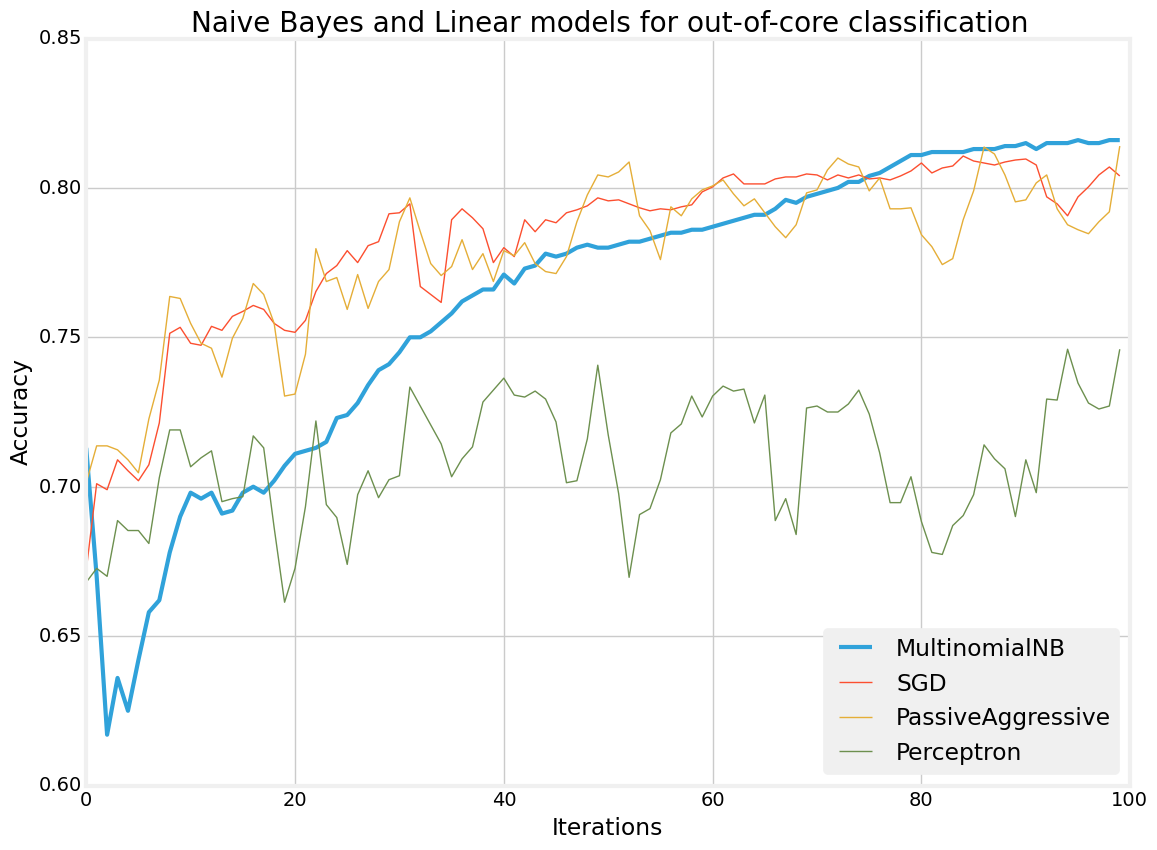

10k training 4k test

10k pages, 500 batch size, 100 iterations

NB steadily converges,

SGD, PA, Perceptron: unstable

Naive Bayes, Batch size: 10, 100 and 500

- Loading csv files

- Parsing, segmenting,

- word counts, TfIdF, hashing vectorizer

=> time consuming

@alexip

-

https://scikit-learn.org/stable/modules/scaling_strategies.html

-

Memory imprint of Python Dict https://blog.scrapinghub.com/2014/03/26/optimizing-memory-usage-of-scikit-learn-models-using-succinct-tries/

-

Dask https://github.com/dask/dask-examples/blob/master/nyctaxi-2013.ipynb

-

scikit learn https://fa.bianp.net/talks/sheffield_april_2014/#/step-19

-

https://scikit-learn.org/stable/modules/generated/sklearn.linear_model.SGDClassifier.html

-

https://github.com/szilard/benchm-ml/blob/master/0-init/1-install.txt

-

https://stackoverflow.com/questions/15036630/batch-gradient-descent-with-scikit-learn-sklearn